29 April 2024

Zoom: https://u-paris.zoom.us/j/85172751178?pwd=Zm5tZm42d0FPN0JHVWFVd3E0MkFoZz09

Room 134 (first floor)

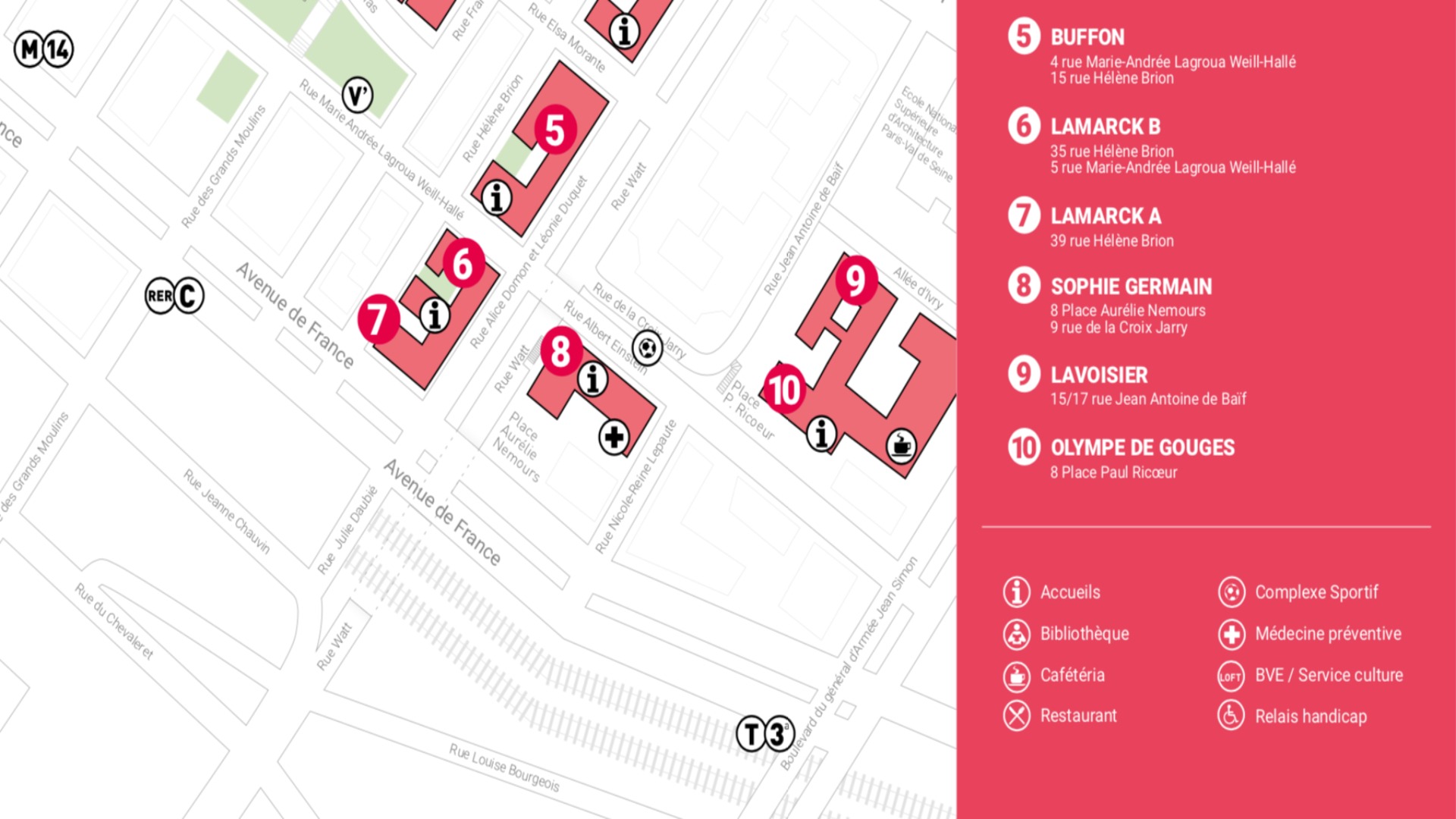

Bâtiment Olympe de Gouges

8 Place Paul Ricoeur

75013 PARIS

Access to the Olympe de Gouges building

This informal workshop is intended to discuss various approaches to probing audio LLMs, such as Whisper (Radford et al., 2022) or wav2vec 2.0 (Baevski et al., 2020).

PROVISIONAL PROGRAMME

9h00 Nicolas Ballier & Guillaume Wisniewski (UPCité): Introduction

We briefly present ongoing research on speech language models.

Session 1: Speech variability and LLM responses: some experiments and questions

9h15 Tori (Georgina) Fullerton (UPCité): How do Whisper models respond to speech variability?

[This talk compares Whisper model transcriptions with human perception of voice onset time (VOT) variation and showcases ongoing research on the detection of compact compounds in English.]

9h40 Discussion

10h00 Richard Wright (University of Washington), Nicolas Ballier & Philippe Martin (UPCité): Resynthesising speech and analysing responses to segmental and suprasegmental features

[This talk reports ongoing research on vowel and pitch synthesis and on how Whisper models respond to it.]

10h15 Discussion

10h30 Behnoosh Namdarzadeh (UPCité): Whisper transcriptions for lesser-resourced languages: the case of Persian

[This talk reports our findings on the transcription of a language for which Whisper was trained on only 24 hours of speech.]

10h40 Coffee break

Session 2: Probing speech language models: first experiments and results

11h00 Nicolas Ballier & Jean-Baptiste Yunès (UPCité): A customised C++ implementation of Whisper to probe representations

[This talk presents our reverse-engineering method, based on the internal representations of the Whisper models.]

11h10 Hosein Mohebbi (Tilburg): Adapting context mixing methods to explore speech transformers

[This talk presents the workflow used to analyse acoustic and linguistic representations in the EMNLP 2023 paper by Mohebbi et al. (2023).]

Mohebbi, H., Chrupała, G., Zuidema, W., & Alishahi, A. (2023). Homophone Disambiguation Reveals Patterns of Context Mixing in Speech Transformers. In Proceedings of the 2023 Conference on Empirical Methods in Natural Language Processing (pp. 8249-8260).

11h40 Discussion

Session 3: So many parameters, so little time

12h00 Round table

[We discuss some of the LLM parameters and methods for investigating audio LLMs. How can hallucinations and language-model effects be investigated systematically?]

Antonio Balvet (Lille): Prompt engineering for spoken transcriptions of French

Peter Uhrig (Erlangen): Checking accuracy and investigating speed optimisation with Whisper

Alternates: Guillaume Wisniewski and the Deeptypo team (TBC): Playing with wav2vec and the raw signal
